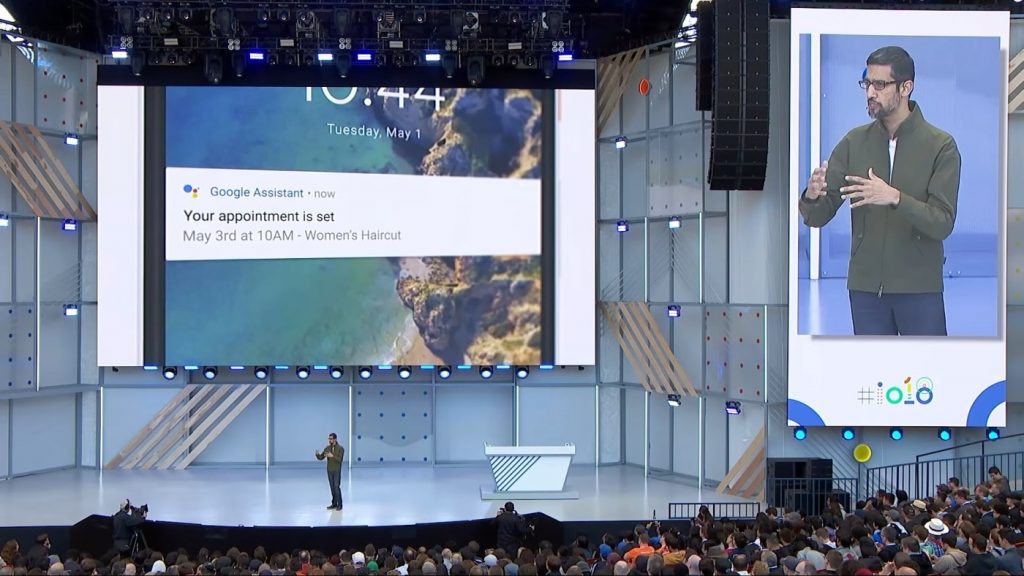

Google Duplex System – The AI That Books a Table for You and Sounds Human

Google’s I/O conference delivered a surprise: the company impressed the public with Duplex, an AI system that can make a phone call and book a haircut for you.

The Duplex demo showed how easily a haircut or a restaurant table can be booked without having to make the call yourself. What’s more, this was a phone call with a twist: the people at the other end of the line did not notice that they were talking to the Google Assistant.

The AI asked the right questions at the right time, and even added the natural “mhhm” that we instinctively say from time to time in a conversation, which kept the discussion running smoothly.

The crowd was stunned and saw it as a huge technological achievement. However, it also opens a Pandora’s box of ethical and social challenges.

Does Google have an obligation to let people know that they are talking to an AI? Does this sort of advancement erode our trust in what we hear?

At the conference, Google explained that the system can only converse in “closed domains”, meaning exchanges that are functional. This means that, at least for now, the Google Assistant won’t be able to chat with your friends about events or share any information with them about your activities and personal life.

Listening closely to the conversations that the AI had with the two employees (one at a restaurant, one at a hair salon), we can notice that the system relies on a few tricks that work. Although the AI was able to navigate a series of misunderstandings, it did so through techniques such as rephrasing and repeating questions. What truly helped was the tone, intonation and human-like voice that the company implemented.

Snippets of the conversations seem to show real intelligence, but closer analysis reveals programmed habits.

It is important to understand that the company is currently calling Duplex an “experiment.” This means that it is far from being a finished product, so we must wait and see what happens next.

Source: theverge.com